ai/qwen3.6-safetensors

Multimodal LLM with 35B parameters for coding, agentic tasks, and vision-language understanding

Qwen3.6-35B-A3B

Qwen3.6-35B-A3B is a multimodal large language model developed by Qwen (Alibaba Cloud) that combines vision and language understanding with advanced reasoning capabilities. Built on direct feedback from the community, this model prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

Following the February release of the Qwen3.5 series, Qwen3.6-35B-A3B is the first open-weight variant of Qwen3.6, with substantial upgrades in agentic coding and thinking preservation. The model handles frontend workflows and repository-level reasoning with greater fluency and precision than its predecessor. It also introduces an option to retain reasoning context from earlier messages in a conversation, which streamlines iterative development and reduces overhead.

With 35 billion total parameters, of which 3 billion are activated per token through its Mixture of Experts architecture, Qwen3.6-35B-A3B posts strong results across coding benchmarks, agent tasks, multimodal understanding, and general reasoning while keeping inference efficient.

Characteristics

| Attribute | Value |
|---|---|
| Provider | Qwen (Alibaba Cloud) |
| Architecture | Qwen3_5MoeForConditionalGeneration (Mixture of Experts) |
| Languages | English, Chinese, and multilingual |
| Input modalities | Text, Image, Video |
| Output modalities | Text |
| License | Apache 2.0 |
| Context length | 262,144 tokens natively, extensible to 1,010,000 tokens |
| Parameters | 35B total, 3B activated |

Using this model with Docker Model Runner

docker model run ai/qwen3.6-safetensors

For more information, check out the Docker Model Runner docs.
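Beyond the interactive CLI, Docker Model Runner can also serve models over an OpenAI-compatible HTTP API. The sketch below only builds the request and does not send it. The base URL assumes host-side TCP access is enabled on the default port, and `build_chat_request` is a hypothetical helper written for this example, not part of any Docker SDK.

```python
import json
import urllib.request

# Assumption: Docker Model Runner is reachable from the host over TCP on its
# default port and exposes an OpenAI-compatible API under this base URL.
BASE_URL = "http://localhost:12434/engines/v1"
MODEL = "ai/qwen3.6-safetensors"


def build_chat_request(prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the model."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request("Summarize what a Mixture of Experts layer does.")
print(request.get_method(), request.full_url)
```

With the model pulled and the runner listening, passing `request` to `urllib.request.urlopen` should return a JSON body in the usual chat completion shape.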

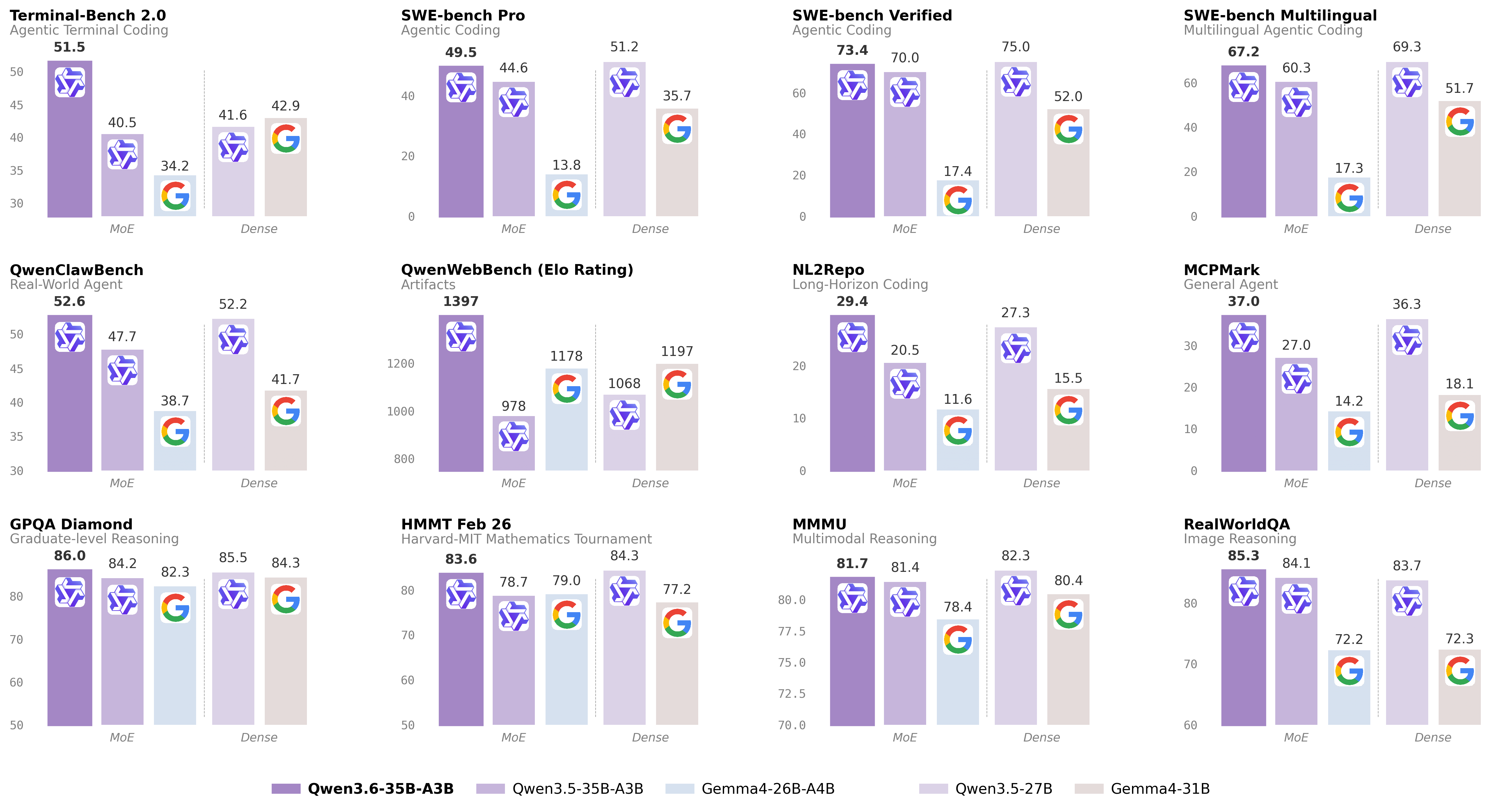

Benchmarks

Coding Agent Benchmarks

| Benchmark | Qwen3.5-27B | Gemma4-31B | Qwen3.5-35B-A3B | Gemma4-26BA4B | Qwen3.6-35B-A3B |
|---|---|---|---|---|---|
| SWE-bench Verified | 75.0 | 52.0 | 70.0 | 17.4 | 73.4 |
| SWE-bench Multilingual | 69.3 | 51.7 | 60.3 | 17.3 | 67.2 |
| SWE-bench Pro | 51.2 | 35.7 | 44.6 | 13.8 | 49.5 |
| Terminal-Bench 2.0 | 41.6 | 42.9 | 40.5 | 34.2 | 51.5 |
| Claw-Eval (Avg) | 64.3 | 48.5 | 65.4 | 58.8 | 68.7 |
| Claw-Eval (Pass^3) | 46.2 | 25.0 | 51.0 | 28.0 | 50.0 |
| SkillsBench (Avg5) | 27.2 | 23.6 | 4.4 | 12.3 | 28.7 |
| QwenClawBench | 52.2 | 41.7 | 47.7 | 38.7 | 52.6 |
| NL2Repo | 27.3 | 15.5 | 20.5 | 11.6 | 29.4 |
| QwenWebBench | 1068 | 1197 | 978 | 1178 | 1397 |

General Agent Benchmarks

| Benchmark | Qwen3.5-27B | Gemma4-31B | Qwen3.5-35B-A3B | Gemma4-26BA4B | Qwen3.6-35B-A3B |
|---|---|---|---|---|---|
| TAU3-Bench | 68.4 | 67.5 | 68.9 | 59.0 | 67.2 |
| VITA-Bench | 41.8 | 43.0 | 29.1 | 36.9 | 35.6 |
| DeepPlanning | 22.6 | 24.0 | 22.8 | 16.2 | 25.9 |
| Tool Decathlon | 31.5 | 21.2 | 28.7 | 12.0 | 26.9 |
| MCPMark | 36.3 | 18.1 | 27.0 | 14.2 | 37.0 |
| MCP-Atlas | 68.4 | 57.2 | 62.4 | 50.0 | 62.8 |
| WideSearch | 66.4 | 35.2 | 59.1 | 38.3 | 60.1 |

Knowledge Benchmarks

| Benchmark | Qwen3.5-27B | Gemma4-31B | Qwen3.5-35B-A3B | Gemma4-26BA4B | Qwen3.6-35B-A3B |
|---|---|---|---|---|---|
| MMLU-Pro | 86.1 | 85.2 | 85.3 | 82.6 | 85.2 |
| MMLU-Redux | 93.2 | 93.7 | 93.3 | 92.7 | 93.3 |
| SuperGPQA | 65.6 | 65.7 | 63.4 | 61.4 | 64.7 |
| C-Eval | 90.5 | 82.6 | 90.2 | 82.5 | 90.0 |

STEM & Reasoning Benchmarks

| Benchmark | Qwen3.5-27B | Gemma4-31B | Qwen3.5-35B-A3B | Gemma4-26BA4B | Qwen3.6-35B-A3B |
|---|---|---|---|---|---|
| GPQA | 85.5 | 84.3 | 84.2 | 82.3 | 86.0 |
| HLE | 24.3 | 19.5 | 22.4 | 8.7 | 21.4 |
| LiveCodeBench v6 | 80.7 | 80.0 | 74.6 | 77.1 | 80.4 |
| HMMT Feb 25 | 92.0 | 88.7 | 89.0 | 91.7 | 90.7 |
| HMMT Nov 25 | 89.8 | 87.5 | 89.2 | 87.5 | 89.1 |
| HMMT Feb 26 | 84.3 | 77.2 | 78.7 | 79.0 | 83.6 |
| IMOAnswerBench | 79.9 | 74.5 | 76.8 | 74.3 | 78.9 |
| AIME 26 | 92.6 | 89.2 | 91.0 | 88.3 | 92.7 |

Vision-Language Benchmarks

| Benchmark | Qwen3.5-27B | Claude-Sonnet-4.5 | Gemma4-31B | Gemma4-26BA4B | Qwen3.5-35B-A3B | Qwen3.6-35B-A3B |
|---|---|---|---|---|---|---|
| MMMU | 82.3 | 79.6 | 80.4 | 78.4 | 81.4 | 81.7 |
| MMMU-Pro | 75.0 | 68.4 | 76.9 | 73.8 | 75.1 | 75.3 |
| MathVista (mini) | 87.8 | 79.8 | 79.3 | 79.4 | 86.2 | 86.4 |
| ZEROBench_sub | 36.2 | 26.3 | 26.0 | 26.3 | 34.1 | 34.4 |
| RealWorldQA | 83.7 | 70.3 | 79.3 | 75.6 | 81.9 | 82.1 |

Model Architecture

Qwen3.6-35B-A3B features an advanced Mixture of Experts (MoE) architecture:

- Total Parameters: 35B with 3B activated per token
- Hidden Dimension: 2048
- Number of Layers: 40
- Hidden Layout: 10 × (3 × (Gated DeltaNet → MoE) → 1 × (Gated Attention → MoE))
- Gated DeltaNet:
  - Number of Linear Attention Heads: 32 for V and 16 for QK
  - Head Dimension: 128
- Gated Attention:
  - Number of Attention Heads: 16 for Q and 2 for KV
  - Head Dimension: 256
  - Rotary Position Embedding Dimension: 64
- Mixture of Experts:
  - Number of Experts: 256
  - Number of Activated Experts: 8 Routed + 1 Shared
  - Expert Intermediate Dimension: 512
- Token Embedding: 248,320 (Padded)
- Context Length: 262,144 tokens natively, extensible to 1,010,000 tokens
- Multi-Token Prediction (MTP): Trained with multi-step prediction
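The hidden layout can be checked against the layer count with a little arithmetic. The sketch below expands the pattern into a flat per-layer list and derives two ratios from the numbers listed above. The layer labels and the `expand_layout` helper are illustrative names, not identifiers from the model's code.

```python
from collections import Counter

# Values copied from the architecture list above.
NUM_BLOCKS = 10            # outer repetition count in the hidden layout
DELTANET_PER_BLOCK = 3     # (Gated DeltaNet -> MoE) layers per block
ATTENTION_PER_BLOCK = 1    # (Gated Attention -> MoE) layers per block
NUM_EXPERTS = 256
ROUTED_ACTIVE = 8          # routed experts chosen per token
Q_HEADS = 16
KV_HEADS = 2


def expand_layout() -> list[str]:
    """Expand 10 x (3 x DeltaNet, 1 x Attention) into one label per layer."""
    block = (["gated_deltanet"] * DELTANET_PER_BLOCK
             + ["gated_attention"] * ATTENTION_PER_BLOCK)
    return block * NUM_BLOCKS


layers = expand_layout()
counts = Counter(layers)

print(len(layers))                   # 40, matching "Number of Layers"
print(counts["gated_deltanet"])      # 30
print(counts["gated_attention"])     # 10
print(ROUTED_ACTIVE / NUM_EXPERTS)   # 0.03125 of routed experts used per token
print(Q_HEADS // KV_HEADS)           # 8 query heads share each KV head
```

Every fourth layer is therefore a Gated Attention layer, and each token is routed to 8 of the 256 experts in addition to the shared expert.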

Key Features

Agentic Coding

Qwen3.6 handles frontend workflows and repository-level reasoning with greater fluency and precision. Among the models compared in the coding agent benchmarks above, it scores highest on Terminal-Bench 2.0 (51.5), NL2Repo (29.4), and QwenWebBench (1397), and is second only to Qwen3.5-27B on the three SWE-bench variants.

Thinking Preservation

A new option to retain reasoning context from historical messages enables more coherent multi-turn interactions, streamlining iterative development and reducing computational overhead.
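To make the idea concrete, here is a minimal client-side sketch of what keeping or dropping earlier reasoning could look like. It is an illustration only: how the option is actually exposed is not specified here, and both the `reasoning_content` field name and the `prepare_history` helper are assumptions made for this example.

```python
from copy import deepcopy


def prepare_history(messages: list[dict], preserve_thinking: bool) -> list[dict]:
    """Return a copy of the history, optionally dropping earlier reasoning.

    Hypothetical helper: `reasoning_content` is an assumed field name for the
    reasoning text attached to assistant messages.
    """
    prepared = deepcopy(messages)
    if preserve_thinking:
        return prepared
    for message in prepared:
        if message.get("role") == "assistant":
            # Remove the reasoning text but keep the visible answer.
            message.pop("reasoning_content", None)
    return prepared


history = [
    {"role": "user", "content": "Why does the login test fail?"},
    {
        "role": "assistant",
        "reasoning_content": "The fixture resets the session before the assert.",
        "content": "The session fixture runs too early; move it after setup.",
    },
    {"role": "user", "content": "Apply that fix to the other tests too."},
]

kept = prepare_history(history, preserve_thinking=True)
dropped = prepare_history(history, preserve_thinking=False)
print("reasoning_content" in kept[1])     # True
print("reasoning_content" in dropped[1])  # False
```

With reasoning retained, a follow-up turn can build on the earlier analysis instead of re-deriving it, which is where the reduced overhead would come from.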

Multimodal Understanding

Native support for image and video inputs alongside text, enabling comprehensive visual understanding tasks including document processing, chart analysis, and visual question answering.
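As a rough sketch of how an image might be passed alongside text, the helper below builds a user message in the OpenAI-style content-parts format with the image inlined as a base64 data URL. Whether a given serving endpoint accepts this format for this model is an assumption, and `build_image_message` is a hypothetical name.

```python
import base64


def build_image_message(question: str, image_bytes: bytes,
                        mime_type: str = "image/png") -> dict:
    """Build a user message that pairs one image with a text question."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            # Assumption: the server understands OpenAI-style image parts.
            {"type": "image_url",
             "image_url": {"url": f"data:{mime_type};base64,{encoded}"}},
            {"type": "text", "text": question},
        ],
    }


# Placeholder bytes stand in for a real file read with open(path, "rb").
message = build_image_message("What trend does this chart show?", b"\x89PNG")
print(message["content"][0]["image_url"]["url"][:22])
```

The resulting dictionary can be placed in the `messages` list of a chat completion request in the same way as a plain text message.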

Extended Context

With native support for 262K tokens and extensibility to over 1 million tokens, the model can process entire codebases, long documents, and complex multi-turn conversations.
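A back-of-the-envelope estimate shows why long contexts stay tractable with this layout. The sketch assumes that only the Gated Attention layers keep a key/value cache that grows with sequence length, that Gated DeltaNet layers hold a fixed-size state, and that cached values use 2 bytes each. It ignores weights, activations, and batch sizes above one, so treat the output as an order-of-magnitude figure.

```python
# Values from the Model Architecture section.
NUM_LAYERS = 40
ATTENTION_EVERY = 4      # one Gated Attention layer in every 4 layers
KV_HEADS = 2
HEAD_DIM = 256
BYTES_PER_VALUE = 2      # assumption: 16-bit cache entries


def kv_cache_bytes(num_tokens: int) -> int:
    """Estimate KV cache size for one sequence of `num_tokens` tokens."""
    attention_layers = NUM_LAYERS // ATTENTION_EVERY
    # Factor 2 covers the separate key and value tensors.
    per_token = attention_layers * 2 * KV_HEADS * HEAD_DIM * BYTES_PER_VALUE
    return per_token * num_tokens


for tokens in (262_144, 1_010_000):
    gib = kv_cache_bytes(tokens) / 1024**3
    print(f"{tokens:>9,} tokens -> {gib:.2f} GiB")
```

Under these assumptions the cache comes to about 5 GiB at the native 262,144-token context and about 19 GiB at 1,010,000 tokens.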

Considerations

- The model is optimized for coding and agent tasks, particularly excelling in repository-level operations and frontend development

- Multimodal capabilities enable processing of images and videos alongside text inputs

- Best performance is achieved with appropriate sampling settings (temp=1.0, top_p=0.95 for agent tasks; temp=0.6 for precise coding tasks, which lowers variability but is not fully deterministic)

- The Mixture of Experts architecture provides efficient inference by activating only 3B of the 35B parameters per token

- Extended context support (up to 1M tokens) requires appropriate memory allocation and may impact inference speed

- Tool calling and function execution capabilities are built-in, making it well-suited for agent applications
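The sampling settings above can be kept in one place and merged into a request as needed. This is a small sketch using the values from the list; the preset names and the `sampling_params` helper are made up for illustration, and `temperature` and `top_p` are the usual OpenAI-style parameter names.

```python
# Settings taken from the considerations above; preset names are illustrative.
SAMPLING_PRESETS = {
    "agent": {"temperature": 1.0, "top_p": 0.95},
    "coding": {"temperature": 0.6},
}


def sampling_params(task: str) -> dict:
    """Return a copy of the sampling settings for a task type."""
    if task not in SAMPLING_PRESETS:
        known = ", ".join(sorted(SAMPLING_PRESETS))
        raise ValueError(f"unknown task {task!r}; expected one of: {known}")
    return dict(SAMPLING_PRESETS[task])


payload = {
    "model": "ai/qwen3.6-safetensors",
    "messages": [{"role": "user", "content": "Refactor this function."}],
    **sampling_params("coding"),
}
print(payload["temperature"])  # 0.6
```

Returning a copy keeps callers from accidentally mutating the shared presets when they override a value for a single request.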

Generated by

This model card was automatically generated using cagent-action. Want to learn more about Docker Model Runner? Check out the project repository: https://github.com/docker/model-runner.

Tag summary

| Field | Value |
|---|---|
| Content type | Model |
| Digest | sha256:16c6b3d4c… |
| Size | 67 GB |

docker model pull ai/qwen3.6-safetensors:35B-A3B